How Tech Companies Build Modern Performance Management Systems


Performance management in technology companies operates at a speed unlike any other industry. Fast-moving product cycles, rapid skill shifts, and distributed teams create an environment where traditional annual reviews simply can't keep pace with the work itself.

Technology organizations depend on structured performance systems to maintain competitive advantage in an industry where talent makes the difference between market leadership and irrelevance.

Clear performance objectives help engineering teams ship features faster. When developers understand which technical debt to prioritize and which features drive business value, they make better daily decisions without waiting for direction. Teams with structured goal frameworks are also better placed to improve delivery metrics such as deployment frequency and lead time for changes.

Tech professionals have options. Organizations with transparent career progression and regular feedback tend to retain senior engineers better than those relying on opaque advancement criteria. Developers want to know which skills will accelerate their growth and which projects will expand their capabilities.

Performance systems that measure the right outcomes create accountability for technical excellence. When teams have clear expectations around test coverage, incident response times, and architecture decisions, they build more maintainable systems. This focus on quality metrics prevents the technical debt accumulation that slows companies down over time.

Tech organizations work in complex matrices where engineers collaborate with product managers, designers, and business stakeholders. Performance management creates shared language around priorities and helps distributed teams understand how their work connects to company objectives. This alignment is particularly important when teams work across time zones and rarely meet in person.

The technology landscape shifts constantly. Performance systems that incorporate learning goals and skill development ensure teams stay current. Regular competency reviews identify capability gaps before they become critical, allowing companies to upskill existing talent rather than constantly recruiting for new positions.

Technology companies face distinct obstacles that make effective performance evaluation more complex than in other industries.

The skills valuable today may be obsolete in eighteen months. Performance frameworks built around specific technologies become outdated quickly. JavaScript frameworks, cloud platforms, and development methodologies shift faster than annual review cycles. Managers struggle to evaluate work done with tools they may not fully understand themselves, especially when junior engineers adopt new approaches senior leaders haven't learned yet.

Tech teams often span continents and time zones. Managers may never see their reports' daily work firsthand. This distance makes it harder to recognize contributions, provide timely feedback, or identify when someone struggles. The shift to remote work has amplified this challenge, as casual conversations that once provided performance context no longer happen naturally. Recognition systems must work asynchronously across locations.

Modern software development is intensely collaborative. Code gets written in pair programming sessions, reviewed by multiple people, and deployed through automated systems. Attributing specific outcomes to individual contributors becomes nearly impossible. Yet performance systems still try to rate individuals when most technical work is team-based. This mismatch creates friction between how work actually happens and how it gets evaluated.

Great engineers need both technical expertise and collaboration ability. Performance evaluations often overweight coding ability while undervaluing communication, mentorship, or cross-team influence. Junior developers might write excellent code but struggle with architecture discussions. Senior engineers might guide technical direction but have smaller commit counts. Traditional metrics miss these nuances, making it hard to fairly evaluate contributors with different strengths.

Tech projects don't align with calendar years. An engineer might work on three completely different initiatives in twelve months, each requiring different skills and producing different outcomes. Annual reviews force artificial aggregation of these distinct experiences. By the time formal feedback arrives, the work being discussed is ancient history and lessons learned are no longer actionable.

Modern performance approaches help technology leaders move from backward-looking evaluation to forward-focused development conversations.

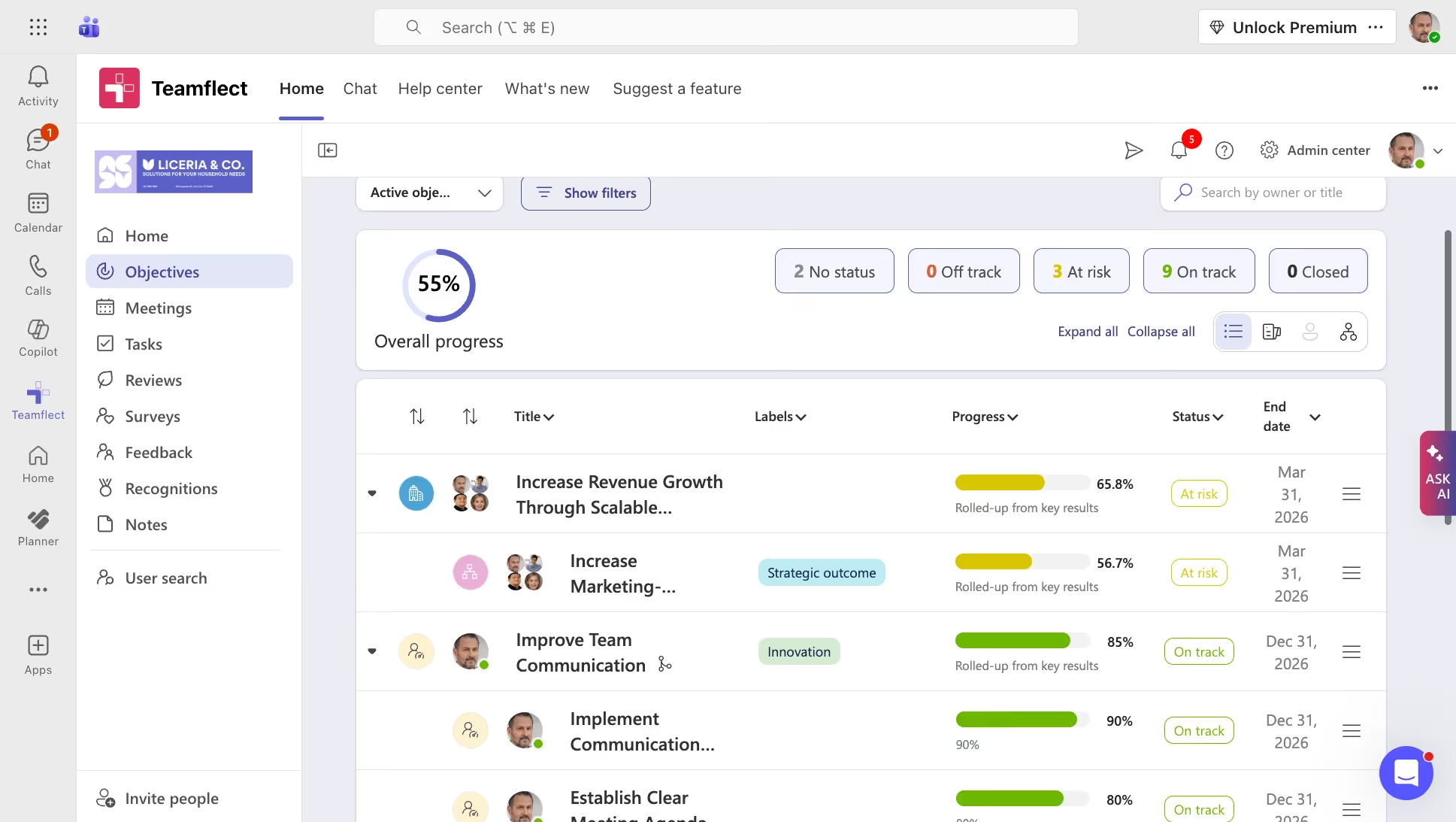

Replace annual goal setting with 90-day OKR (objectives and key results) cycles. This shorter cycle matches tech project timelines and allows course correction as priorities shift. Quarterly reviews let teams respond to market changes, technology breakthroughs, or strategic pivots without waiting for year-end. OKR software built for technology teams, such as Teamflect, makes this practical by automating check-ins and progress tracking.

When implementing quarterly cycles, connect individual objectives to company goals. Developers should see how their sprint work contributes to product milestones. DevOps engineers need clarity on how their infrastructure improvements support business scale. This cascading alignment ensures daily work drives strategic outcomes.
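To make the cascading idea concrete, here is a minimal Python sketch. It assumes a common OKR convention rather than any Teamflect feature: each key result is scored as progress from a baseline toward a target (clamped to 0-1, so it also works for "lower is better" targets such as latency), an objective's score is the unweighted mean of its key results, and a hypothetical `parent` link records which higher-level objective it supports.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class KeyResult:
    description: str
    baseline: float
    target: float
    current: float

    def progress(self) -> float:
        """Fraction of the distance from baseline to target, clamped to [0, 1]."""
        span = self.target - self.baseline
        if span == 0:
            return 1.0 if self.current == self.target else 0.0
        return max(0.0, min(1.0, (self.current - self.baseline) / span))


@dataclass
class Objective:
    title: str
    key_results: list[KeyResult] = field(default_factory=list)
    parent: Optional[Objective] = None  # hypothetical link to the goal this one supports

    def score(self) -> float:
        """Unweighted mean of key-result progress (0.0 if there are no key results)."""
        if not self.key_results:
            return 0.0
        return sum(kr.progress() for kr in self.key_results) / len(self.key_results)

    def alignment_chain(self) -> list[str]:
        """Titles from this objective up to the top-level company goal."""
        chain, node = [], self
        while node is not None:
            chain.append(node.title)
            node = node.parent
        return chain


company = Objective("Cut checkout latency for customers")
team = Objective(
    "Speed up the payments API",
    key_results=[
        KeyResult("p95 latency in ms (lower is better)", baseline=800, target=400, current=600),
        KeyResult("Endpoints covered by load tests", baseline=0, target=10, current=10),
    ],
    parent=company,
)
print(round(team.score(), 2))  # 0.75
print(team.alignment_chain())  # ['Speed up the payments API', 'Cut checkout latency for customers']
```

Walking the `parent` chain is what lets a developer see, from any sprint-level objective, which company goal it ultimately serves.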

Build feedback into the regular work rhythm rather than saving it for formal reviews. Brief weekly check-ins or end-of-sprint retrospectives create opportunities for real-time course correction. These conversations address blockers immediately rather than letting issues compound.

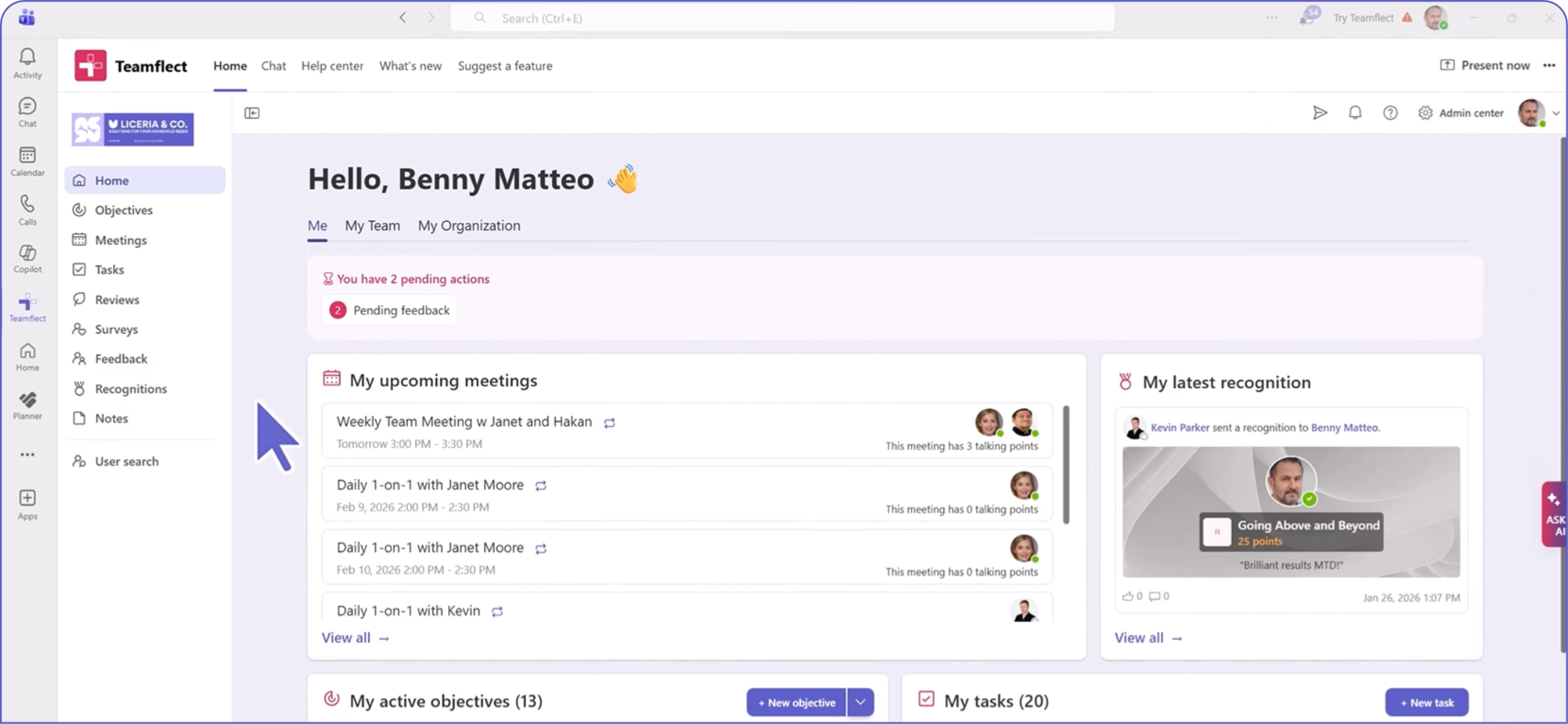

Teamflect, a performance management software with Microsoft Teams integration, makes continuous feedback practical. Managers can provide recognition or coaching in the same tool where technical conversations already happen. This reduces friction and increases the likelihood that feedback actually gets shared when it's most relevant.

Create evaluation frameworks that reflect actual job requirements. Frontend developers need different assessments than backend engineers or site reliability engineers. Security specialists have distinct performance criteria from mobile developers. Generic rubrics fail to capture what makes someone effective in their specific role.

Build competency matrices that define what good looks like at each career level for each role. Junior engineers should understand what skills will move them to mid-level. Senior developers need clarity on the leadership behaviors expected for principal engineer positions. These matrices become development roadmaps, not just evaluation tools.
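A competency matrix can be as simple as a lookup table. The sketch below assumes a hypothetical 1-5 rating scale and illustrative role, level, and skill names; comparing an engineer's current ratings with the next level's expectations turns the matrix into a development roadmap.

```python
from __future__ import annotations

# Hypothetical competency matrix: role -> level -> minimum expected rating (1-5) per skill.
COMPETENCY_MATRIX = {
    "backend": {
        "mid": {"system_design": 2, "code_review": 3, "mentoring": 1},
        "senior": {"system_design": 4, "code_review": 4, "mentoring": 3},
    },
}


def skill_gaps(role: str, target_level: str, current: dict[str, int]) -> dict[str, int]:
    """Return how many points each skill falls short of the target level (gaps only)."""
    expected = COMPETENCY_MATRIX[role][target_level]
    gaps = {}
    for skill, required in expected.items():
        shortfall = required - current.get(skill, 0)  # unrated skills count as 0
        if shortfall > 0:
            gaps[skill] = shortfall
    return gaps


engineer = {"system_design": 3, "code_review": 4, "mentoring": 1}
print(skill_gaps("backend", "senior", engineer))  # {'system_design': 1, 'mentoring': 2}
```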

Create psychological safety by decoupling growth discussions from salary reviews. When engineers know that admitting skill gaps won't immediately impact their pay, they engage more honestly about where they need support. This separation allows managers to have productive conversations about technical weaknesses or collaboration challenges without defensiveness.

Schedule OKR check-ins separately from performance ratings. Use these sessions to discuss career aspirations, technical interests, and learning goals. Save compensation conversations for their own dedicated time with clear criteria about how performance translates to pay changes.

Engineering excellence requires collaboration with product, design, and business teams. Multi-source feedback provides a complete picture of someone's impact. Product managers can speak to an engineer's customer focus. Other developers can evaluate code review quality. Designers can assess collaboration effectiveness.

Structure peer feedback around specific behaviors and outcomes rather than personality traits. Ask reviewers to cite examples of how someone influenced a project outcome or helped a teammate solve a problem. This specificity makes feedback more actionable and reduces bias.

Make skill development visible in your performance system. Track completed certifications, new languages learned, or frameworks adopted. Document contributions to open source projects or technical talks delivered. This creates a skills portfolio that shows growth over time.

Link these development activities to career progression criteria. If becoming a senior engineer requires distributed systems expertise, show which projects or learning opportunities will build that knowledge. This transparency helps developers take ownership of their advancement.

Technology performance metrics must balance individual contribution with team outcomes and technical quality with business impact.

Track how consistently teams deliver on sprint commitments. This metric reveals capacity planning effectiveness and helps identify when teams are overcommitted or sandbagging estimates. Velocity trends also show whether process improvements or technical debt work is paying off. Individual contribution should be measured through relative participation in team velocity, not isolated story point completion.
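A minimal sketch of both ideas, assuming story points are tracked per sprint and that each completed story lists everyone who authored, paired on, or reviewed it (a hypothetical data shape). Participation shares deliberately do not sum to 1: a collaborative story credits every contributor, which avoids rewarding isolated point counts.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Sprint:
    committed_points: int
    completed_points: int


def commitment_reliability(sprints: list[Sprint]) -> float:
    """Completed points as a fraction of committed points across several sprints."""
    committed = sum(s.committed_points for s in sprints)
    completed = sum(s.completed_points for s in sprints)
    return completed / committed if committed else 0.0


def participation(stories: list[set[str]]) -> dict[str, float]:
    """Share of completed stories each person contributed to (author, pair, or reviewer)."""
    counts: dict[str, int] = {}
    for contributors in stories:
        for person in contributors:
            counts[person] = counts.get(person, 0) + 1
    total = len(stories)
    return {person: n / total for person, n in sorted(counts.items())}


sprints = [Sprint(40, 36), Sprint(42, 42), Sprint(38, 30)]
print(round(commitment_reliability(sprints), 2))  # 0.9

stories = [{"ana", "ben"}, {"ana"}, {"ben", "chloe"}, {"chloe", "ana"}]
print(participation(stories))  # {'ana': 0.75, 'ben': 0.5, 'chloe': 0.5}
```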

Measure how quickly team members review others' code. Fast, thorough reviews keep work moving and demonstrate collaboration commitment. Average time from review request to approval shows whether knowledge sharing happens efficiently. This KPI catches bottlenecks where senior engineers become review gatekeepers and helps balance workload across teams.
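One way to compute this from pull-request timestamps, assuming each review is exported as a hypothetical `(reviewer, requested_at, approved_at)` tuple. Pairing the review count with the mean turnaround helps spot gatekeepers: a reviewer who handles most requests and also has the slowest turnaround is likely a bottleneck.

```python
from __future__ import annotations

from collections import defaultdict
from datetime import datetime
from statistics import mean


def review_stats(reviews: list[tuple[str, datetime, datetime]]) -> dict[str, tuple[int, float]]:
    """Return {reviewer: (review_count, mean_hours_from_request_to_approval)}."""
    hours = defaultdict(list)
    for reviewer, requested_at, approved_at in reviews:
        hours[reviewer].append((approved_at - requested_at).total_seconds() / 3600)
    return {r: (len(h), round(mean(h), 1)) for r, h in sorted(hours.items())}


t = datetime.fromisoformat
reviews = [
    ("dev_a", t("2024-05-06T09:00"), t("2024-05-06T13:00")),
    ("dev_a", t("2024-05-06T10:00"), t("2024-05-07T10:00")),
    ("dev_b", t("2024-05-06T11:00"), t("2024-05-06T12:30")),
]
print(review_stats(reviews))  # {'dev_a': (2, 14.0), 'dev_b': (1, 1.5)}
```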

For production support roles, track the mean time to detect, acknowledge, and resolve incidents. These metrics reveal technical competence under pressure and commitment to system reliability. Include on-call participation and runbook improvement contributions. Teams with strong performance management see declining incident frequency over time as they address root causes rather than just symptoms.
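A sketch of the three incident timings, assuming each incident record carries four timestamps (the field names are hypothetical). Conventions vary between teams; here detection is measured from fault start, while acknowledgement and resolution are measured from detection. Some teams measure resolution from fault start instead, so state whichever convention you use.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class Incident:
    started: datetime       # when the fault began (often established after the fact)
    detected: datetime      # first alert or report
    acknowledged: datetime  # an on-call engineer took ownership
    resolved: datetime      # service restored


def _mean_minutes(deltas: list[timedelta]) -> float:
    return round(mean(d.total_seconds() / 60 for d in deltas), 1)


def incident_metrics(incidents: list[Incident]) -> dict[str, float]:
    """Mean time to detect, acknowledge, and resolve, in minutes."""
    return {
        "mttd": _mean_minutes([i.detected - i.started for i in incidents]),
        "mtta": _mean_minutes([i.acknowledged - i.detected for i in incidents]),
        "mttr": _mean_minutes([i.resolved - i.detected for i in incidents]),
    }


t = datetime.fromisoformat
incidents = [
    Incident(t("2024-05-01T02:00"), t("2024-05-01T02:10"), t("2024-05-01T02:15"), t("2024-05-01T03:10")),
    Incident(t("2024-05-09T14:00"), t("2024-05-09T14:02"), t("2024-05-09T14:05"), t("2024-05-09T14:32")),
]
print(incident_metrics(incidents))  # {'mttd': 6.0, 'mtta': 4.0, 'mttr': 45.0}
```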

Quantify work devoted to improving code quality, upgrading dependencies, or refactoring architecture. Technical debt paydown rarely gets credit in traditional performance systems despite being critical for long-term velocity. Track test coverage improvements, security vulnerability fixes, or documentation updates. This makes maintenance work visible and valued.
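Making maintenance visible can start with labels already in the issue tracker. This sketch assumes a hypothetical label set and `(label, story_points)` pairs for completed work, and reports both the overall maintenance share and a per-label breakdown.

```python
from __future__ import annotations

from collections import Counter

# Hypothetical labels a team might use to tag maintenance work in its tracker.
MAINTENANCE_LABELS = {"tech-debt", "dependency-upgrade", "refactor", "security-fix", "docs", "tests"}


def maintenance_share(tickets: list[tuple[str, int]]) -> float:
    """Fraction of completed story points that carry a maintenance label."""
    total = sum(points for _, points in tickets)
    maintenance = sum(points for label, points in tickets if label in MAINTENANCE_LABELS)
    return maintenance / total if total else 0.0


def maintenance_breakdown(tickets: list[tuple[str, int]]) -> dict[str, int]:
    """Maintenance points per label, largest first."""
    points_by_label = Counter()
    for label, points in tickets:
        if label in MAINTENANCE_LABELS:
            points_by_label[label] += points
    return dict(points_by_label.most_common())


tickets = [("feature", 20), ("tech-debt", 5), ("dependency-upgrade", 3), ("feature", 8), ("security-fix", 4)]
print(round(maintenance_share(tickets), 2))  # 0.3
print(maintenance_breakdown(tickets))  # {'tech-debt': 5, 'security-fix': 4, 'dependency-upgrade': 3}
```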

Measure outcomes from projects requiring collaboration between engineering, product, and design. Track whether features launched on time, met quality standards, and achieved intended business results. Include stakeholder satisfaction ratings from product managers or customer feedback scores. This KPI captures the softer skills traditional metrics miss.
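A simple launch scorecard can combine these signals. The sketch assumes each launch is recorded with planned and actual ship dates, two pass/fail outcome flags, and a 1-5 stakeholder rating; all field names are illustrative.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date
from statistics import fmean


@dataclass
class Launch:
    planned: date
    shipped: date
    met_quality_bar: bool    # e.g. passed the release criteria agreed at kickoff
    hit_business_goal: bool  # e.g. reached the adoption target set at kickoff
    stakeholder_rating: int  # 1-5 score from product and design partners


def launch_scorecard(launches: list[Launch]) -> dict[str, float]:
    """Rates of on-time, quality, and goal outcomes plus the mean stakeholder rating."""
    if not launches:
        return {}
    n = len(launches)
    return {
        "on_time_rate": sum(x.shipped <= x.planned for x in launches) / n,
        "quality_rate": sum(x.met_quality_bar for x in launches) / n,
        "goal_rate": sum(x.hit_business_goal for x in launches) / n,
        "avg_stakeholder_rating": round(fmean(x.stakeholder_rating for x in launches), 2),
    }


launches = [
    Launch(date(2024, 3, 1), date(2024, 2, 28), True, True, 5),
    Launch(date(2024, 4, 15), date(2024, 4, 22), True, False, 4),
    Launch(date(2024, 6, 1), date(2024, 6, 1), False, True, 3),
    Launch(date(2024, 7, 1), date(2024, 6, 30), True, False, 4),
]
print(launch_scorecard(launches))
# {'on_time_rate': 0.75, 'quality_rate': 0.75, 'goal_rate': 0.5, 'avg_stakeholder_rating': 4.0}
```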

Monitor how quickly engineers adopt new technologies or methodologies when business needs require it. Track completion of relevant courses, certifications earned, or mentorship of teammates in new skills. Measure time from learning to productive contribution in a new tech stack. This forward-looking metric predicts adaptability better than backward-looking assessments of past work.
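Time from learning to productive contribution can be approximated as the days between finishing training and a first merged change in the new stack. Both the data shape and that choice of milestone are assumptions for illustration; the median keeps one slow ramp-up from dominating the team figure.

```python
from __future__ import annotations

from datetime import date
from statistics import median


def ramp_up_days(records: dict[str, tuple[date, date]]) -> dict[str, int]:
    """Days from finishing training to the first merged change in the new stack."""
    return {name: (first_change - trained).days for name, (trained, first_change) in records.items()}


# Hypothetical records: engineer -> (training completed, first merged change in new stack)
records = {
    "ana": (date(2024, 1, 10), date(2024, 1, 24)),
    "ben": (date(2024, 1, 12), date(2024, 2, 16)),
    "chloe": (date(2024, 2, 1), date(2024, 2, 9)),
}
days = ramp_up_days(records)
print(days)                   # {'ana': 14, 'ben': 35, 'chloe': 8}
print(median(days.values()))  # 14
```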

Recognize knowledge sharing activities that strengthen overall team performance. Track technical documentation authored, lunch-and-learn sessions delivered, or mentoring hours invested. Measure pull requests that improve team tooling or CI/CD pipelines. These force multiplier activities often go unrecognized but have outsized impact on team effectiveness.

Well-intentioned evaluation systems often fail when they ignore how technical work actually happens.

Tech managers sometimes track lines of code written, commits made, or story points completed by individual developers. These metrics miss the collaborative reality of software development. An engineer who spends their time reviewing others' code, mentoring junior developers, or improving team tooling produces less individual output but creates more team value. Systems that only count individual contributions incentivize the wrong behaviors and penalize force multipliers.

Many performance frameworks evaluate skills that mattered five years ago but have limited relevance today. Rating someone's proficiency in technologies the company no longer uses or ignoring emerging capabilities the business will need next quarter makes evaluations feel disconnected from actual work. Competency models must update as quickly as the technology stack shifts, or they become obstacles rather than guides.

Distributed teams create visibility challenges. Engineers working from home offices in different time zones often miss informal recognition that happens in headquarters. Performance systems must intentionally surface contributions from remote workers. Without deliberate inclusion, recognition flows disproportionately to whoever sits near leadership, creating retention problems among your best remote talent.
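A quick check for this imbalance, assuming a hypothetical export of recognition recipients and a map of each person's work location. Normalizing by headcount shows whether remote engineers receive recognition at a comparable rate per person.

```python
from __future__ import annotations

from collections import Counter


def recognition_per_person(recognitions: list[str], locations: dict[str, str]) -> dict[str, float]:
    """Mean recognitions received per person in each location group.

    recognitions: one recipient name per recognition event.
    locations: {name: group}, e.g. "remote" or "office".
    """
    received = Counter(recognitions)
    totals, heads = Counter(), Counter()
    for person, group in locations.items():
        heads[group] += 1
        totals[group] += received[person]  # people never recognized count as 0
    return {group: totals[group] / heads[group] for group in sorted(heads)}


locations = {"ana": "remote", "ben": "office", "chloe": "remote", "dev": "office"}
recognitions = ["ben", "ben", "dev", "ana", "dev", "ben"]
print(recognition_per_person(recognitions, locations))  # {'office': 2.5, 'remote': 0.5}
```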

Treating formal reviews as separate from ongoing development discussions creates artificial boundaries. Performance evaluation becomes something that happens to engineers rather than with them. The best systems integrate continuous feedback loops so formal reviews are simply documentation of conversations that already happened. When reviews contain surprises, the performance management process has already failed.

Annual review cycles force awkward aggregation across projects with different lifecycles. An engineer who spent Q1 on research, Q2 ramping on a new codebase, Q3 shipping features, and Q4 on incident response has experienced four different work contexts. Pretending this is one coherent performance period creates evaluation fiction. Performance systems need flexibility to assess work in its actual context.

Technology performance systems are shifting toward real-time feedback, skills-based development, and AI-enhanced insights that match the pace and complexity of modern software development.

AI is automating routine tasks such as goal-setting and review drafting, and increasingly acts as a virtual coach that delivers tailored advice, a shift that could eventually signal the end of annual reviews. In technology organizations, this enables personalized "unit of one" motivation strategies and frees managers for high-impact mentoring.

SHRM predicts AI-driven coaching will transform organization-wide performance by enhancing efficiency, though early adoption shows mixed results in employee output.

Tech teams thrive on iteration, so performance management is moving toward real-time, agile check-ins via digital tools and pulse surveys. This replaces cumbersome yearly evaluations with frequent, informal dialogues that align with sprints and project cycles.

According to Cornell University's research, traditional systems motivate only 1 in 5 employees, prompting a surge in shorter feedback cycles and co-created goals to boost development in fast-paced tech environments.

Beyond productivity, the emphasis is shifting to holistic "human performance," which factors in wellbeing, trust, and personal drivers to counter burnout in high-stakes tech roles. This involves redesigning work for psychological safety and using AI to measure non-traditional metrics.

Deloitte warns that reinventing processes alone won't unlock potential; instead, tapping individual motivation could yield up to 15% higher financial performance in strong-management cultures.

As evaluations shift from tenure-based to skills-focused criteria, tech performance plans increasingly link to individual development plans (IDPs) for emerging needs such as AI ethics or cloud security, with 360-degree feedback providing a comprehensive view.

The US Office of Personnel Management (OPM) recommends ongoing, collaborative IDPs with tailored learning as a best practice, while Cornell highlights agile trends such as dynamic goals to address tech's rapid skill evolution amid economic pressures.

As remote and hybrid models become permanent, performance management now integrates wellbeing metrics, frequent recognition, and bias-minimized evaluations. These tools are essential to sustain engagement across distributed technical teams.

Current data highlights the shift in employee expectations: according to Gallup, 60% of employees in remote-capable roles prefer a hybrid arrangement. Modern platforms address this preference by providing visibility into progress without the need for physical oversight, ensuring that performance is measured by output rather than office presence.

Technology companies need performance management solutions that integrate with their existing workflows and support the unique requirements of engineering teams. Teamflect addresses these challenges through Microsoft Teams integration and flexible performance frameworks.

Technology organizations face performance obstacles that generic HR software struggles to solve. Teamflect's approach tackles these directly through features built for fast-moving technical environments.

"Teamflect is more than a tool. It is an enabler. It empowers us to keep our people at the core of everything we do."

Loredana Albenzio - Head of Talent and Learning, Securitas Europe

Securitas, a global security leader, needed to digitalize people processes for 120,000 employees across 20 countries while maintaining a focus on individual growth.


"It's helped create a lot more consistency. Teamflect really provided us with that one-stop-shop experience."

Patrick Rankin - Director of Talent, K2 Services

K2 Services, an IT provider for law firms, required a performance platform that could scale without increasing administrative work.


"The integration with my Microsoft Teams meetings makes my life so much easier. It's a one-stop shop."

Charmaine Loratet - Chief People Officer, Altis Consulting

Altis, a data analytics firm operating across three countries, needed a flexible platform to match their unique technical workflows.


Base evaluations on documented outcomes and specific technical contributions rather than subjective impressions of who works hardest. Use multi-source feedback from team members, cross-functional partners, and project stakeholders to capture the full scope of someone's impact.

Track contributions continuously throughout the quarter rather than relying on memory during formal reviews, which prevents recency bias and ensures consistent standards across remote and in-office team members.

Keep dated records of one-on-one conversations noting specific project outcomes, technical decisions, and skill development progress. Document both exemplary work and areas needing improvement as they occur, including examples like particular pull requests, architectural proposals, or incident responses.

Track completed certifications, new technologies learned, and mentorship provided. This ongoing documentation provides concrete examples during reviews and helps developers understand exactly which behaviors to continue or adjust.

Conduct calibration sessions at least quarterly to ensure rating consistency across teams and technical disciplines. Because technology roles vary significantly, engineering managers should review ratings together to verify that "meets expectations" for a frontend developer carries the same meaning as the same rating for a DevOps engineer. Regular calibration prevents rating inflation, catches unconscious bias, and ensures that performance standards remain consistent across remote and distributed teams.
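Calibration discussions go faster with a simple prompt for where to look. This sketch assumes hypothetical rating labels and flags teams whose share of top ratings differs from the organization-wide share by more than an arbitrary 15-percentage-point tolerance. A flag is a question for the calibration session, not evidence of inflation or bias on its own.

```python
from __future__ import annotations


def top_share(ratings: list[str], top_label: str = "exceeds") -> float:
    """Fraction of ratings equal to the top label."""
    return sum(r == top_label for r in ratings) / len(ratings)


def calibration_flags(ratings_by_team: dict[str, list[str]], tolerance: float = 0.15) -> dict[str, float]:
    """Teams whose top-rating share differs from the overall share by more than `tolerance`.

    Returns {team: difference}; positive means more top ratings than the organization overall.
    Assumes every team has at least one rating.
    """
    overall = top_share([r for ratings in ratings_by_team.values() for r in ratings])
    flags = {}
    for team, ratings in sorted(ratings_by_team.items()):
        diff = top_share(ratings) - overall
        if abs(diff) > tolerance:
            flags[team] = round(diff, 2)
    return flags


ratings_by_team = {
    "frontend": ["exceeds", "exceeds", "exceeds", "meets"],
    "devops": ["meets", "meets", "exceeds", "meets"],
    "backend": ["meets", "exceeds", "exceeds", "below"],
}
print(calibration_flags(ratings_by_team))  # {'devops': -0.25, 'frontend': 0.25}
```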

Address performance gaps immediately through specific, technical conversations that identify root causes. Distinguish among skill deficits that training can fix, resource constraints that management must solve, and fundamental fit issues.

Create focused improvement plans with measurable technical milestones, such as completing specific features with quality standards or improving code review turnaround times. Provide necessary support through pairing sessions, training resources, or mentorship while documenting all interventions.

The primary challenge is maintaining visibility across distributed teams and asynchronous work patterns. Engineering managers often oversee developers across multiple time zones, making real-time observation difficult.

Other challenges include evaluating collaborative contributions when most work is team-based, keeping competency models current as technology stacks change, and balancing technical depth assessment with soft skills evaluation. Performance management software with Microsoft Teams integration helps address these constraints by bringing feedback into the daily workflow.
